前文我们了解了ceph的存储池、PG、CRUSH、客户端IO的简要工作过程、Ceph客户端计算PG_ID的步骤的相关话题,回顾请参考https://www.cnblogs.com/qiuhom-1874/p/16733806.html;今天我们来聊一聊在ceph上操作存储池相关命令的用法和说明;

在ceph上操作存储池不外乎就是查看列出、创建、重命名和删除等操作,常用相关的工具都是“ceph osd pool”的子命令,ls、create、rename和rm等;

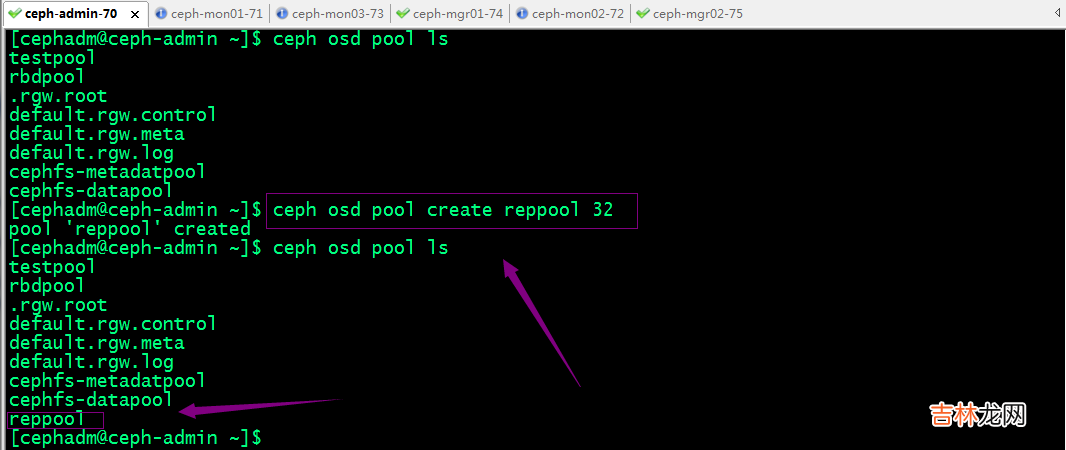

1、创建存储池

副本型存储池创建命令格式

ceph osd pool create <pool-name> <pg-num> [pgp-num] [replicated] [crush-rule-name] [expected-num-objects]提示:创建副本型存储池上面的必要选项有存储池的名称和PG的数量,后面可以不用跟pgp和replicated来指定存储池的pgp的数量和类型为副本型;即默认创建不指定存储池类型,都是创建的是副本池;

纠删码池存储池创建命令格式

ceph osd pool create <pool-name> <pg-num> <pgp-num> erasure [erasure-code-profile] [crush-rule-name] [expected-num-objects]提示:创建纠删码池存储池,需要给定存储池名称、PG的数量、PGP的数量已经明确指定存储池类型为erasure;这里解释下PGP,所谓PGP(Placement Group for Placement purpose)就是用于归置的PG数量,其值应该等于PG的数量; crush-ruleset-name是用于指定此存储池所用的CRUSH规则集的名称,不过,引用的规则集必须事先存在;

erasure-code-profile参数是用于指定纠删码池配置文件;未指定要使用的纠删编码配置文件时,创建命令会为其自动创建一个,并在创建相关的CRUSH规则集时使用到它;默认配置文件自动定义k=2和m=1,这意味着Ceph将通过三个OSD扩展对象数据,并且可以丢失其中一个OSD而不会丢失数据,因此,在冗余效果上,它相当于一个大小为2的副本池,不过,其存储空间有效利用率为2/3而非1/2 。

示例:创建一个副本池

文章插图

示例:创建一个纠删码池

文章插图

2、获取存储池的相关信息

列出存储池:ceph osd pool ls [detail]

[cephadm@ceph-admin ~]$ ceph osd pool lstestpoolrbdpool.rgw.rootdefault.rgw.controldefault.rgw.metadefault.rgw.logcephfs-metadatpoolcephfs-datapoolreppoolerasurepool[cephadm@ceph-admin ~]$ ceph osd pool ls detailpool 1 'testpool' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 16 pgp_num 16 last_change 42 flags hashpspool stripe_width 0pool 2 'rbdpool' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 64 pgp_num 64 last_change 81 flags hashpspool,selfmanaged_snaps stripe_width 0 application rbdremoved_snaps [1~3]pool 3 '.rgw.root' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 8 pgp_num 8 last_change 84 owner 18446744073709551615 flags hashpspool stripe_width 0 application rgwpool 4 'default.rgw.control' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 8 pgp_num 8 last_change 87 owner 18446744073709551615 flags hashpspool stripe_width 0 application rgwpool 5 'default.rgw.meta' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 8 pgp_num 8 last_change 89 owner 18446744073709551615 flags hashpspool stripe_width 0 application rgwpool 6 'default.rgw.log' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 8 pgp_num 8 last_change 91 owner 18446744073709551615 flags hashpspool stripe_width 0 application rgwpool 7 'cephfs-metadatpool' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 64 pgp_num 64 last_change 99 flags hashpspool stripe_width 0 application cephfspool 8 'cephfs-datapool' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 128 pgp_num 128 last_change 99 flags hashpspool stripe_width 0 application cephfspool 9 'reppool' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 32 pgp_num 32 last_change 126 flags hashpspool stripe_width 0pool 10 'erasurepool' erasure size 3 min_size 2 crush_rule 1 object_hash rjenkins pg_num 32 pgp_num 32 last_change 130 flags hashpspool stripe_width 8192[cephadm@ceph-admin ~]$

经验总结扩展阅读

- 阴阳师剧情收录系统有什么功能

- 台式电脑怎么装系统

- 有没有像系统之乡土懒人的小说

- 怎么制作系统u盘win7

- 分布式存储系统之Ceph集群存储池、PG 与 CRUSH

- 苹果ios14.7新功能_苹果ios14.7系统怎么样

- centos7系统资源限制整理

- 引擎之旅 Chapter.4 日志系统

- 电脑被锁怎么重装系统xp

- 分布式存储系统之Ceph集群状态获取及ceph配置文件说明